Breaking Language Barriers: How We Built an AI-Powered Translation System for Global Software

By Muhammad Chhota, Senior Solution Architect at Accucia

Muhammad Chhota is a Senior Solution Architect at Accucia with 10 years of experience building production-grade software systems. He specializes in SaaS architecture, AI integration, and solving complex engineering challenges at scale.

The Wake-Up Call

Ten years in software engineering has taught me many lessons, but one stands out: assuming everyone understands English is a critical mistake.

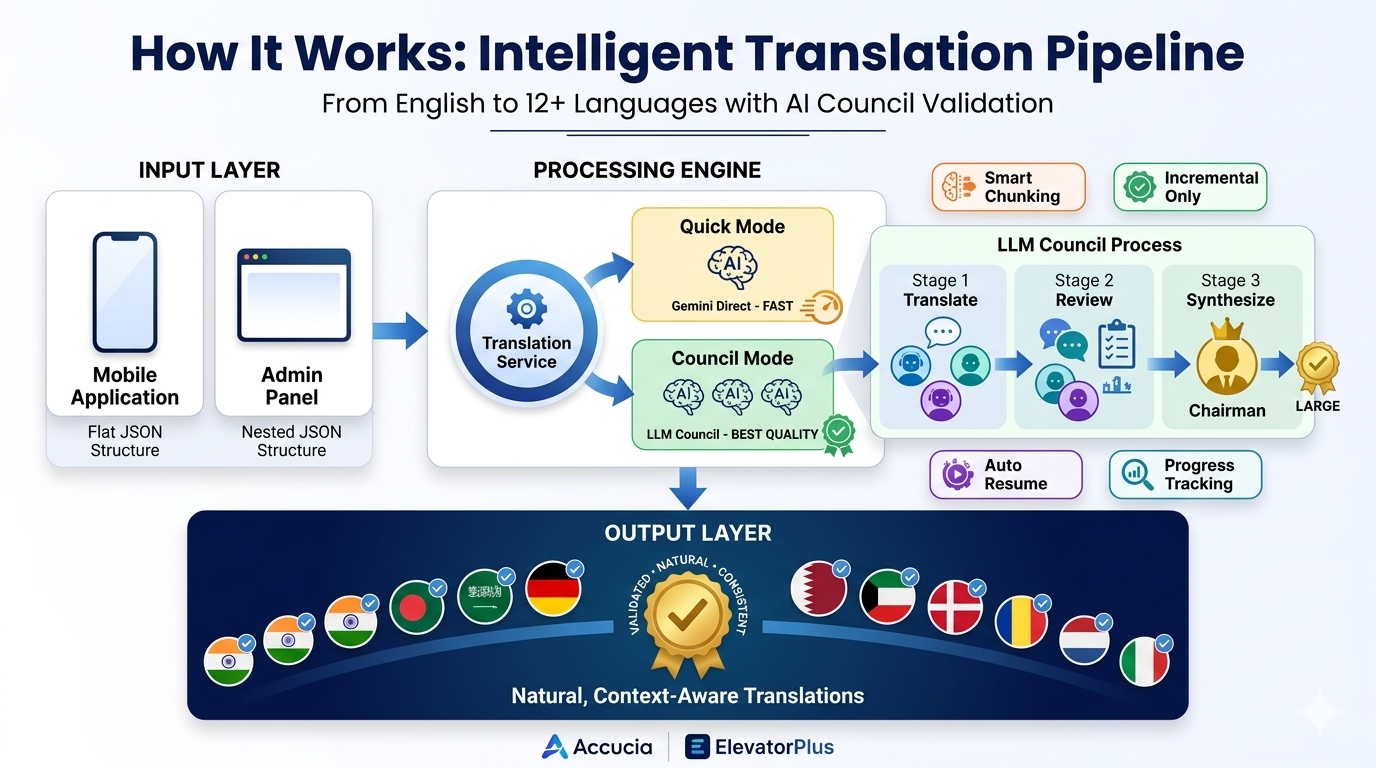

At Accucia, we built ElevatorPlus - a comprehensive SaaS platform used by elevator companies worldwide. What started as a regional solution quickly scaled globally. Today, we serve customers in Germany, France, South Africa, the Middle East, Vietnam, and beyond.

Our platform has two main components:

- Mobile Application - Used by elevator technicians in the field (often non-technical workers with varying education levels)

- Admin Panel - A web-based dashboard for management to oversee business operations

The reality hit us when we visited our customers abroad.

A technician in Vietnam stared at his phone, confused by "Annual Maintenance Contract." A German building manager struggled to navigate the admin panel, clicking through menus trying to decipher English terms. In South Africa, an operator squinted at the screen, trying to make sense of "Confirm" and "Submit."

The software worked perfectly. The features were solid. But the language barrier made it feel broken.

We had built a world-class platform that half our users couldn't fully understand.

That's when we knew we had to solve the translation challenge - and do it right.

The Challenge: Scale Meets Quality

Here's what we were facing:

- Over 3,000 translation keys in our mobile application

- 2,500+ keys in our React admin panel

- 12+ languages needed (Hindi, Gujarati, Marathi, Tamil, Telugu, Kannada, Bengali, Arabic, German, French, Portuguese, Vietnamese)

- Constantly evolving software - new features mean new text every week

- Industry-specific terminology - the elevator industry has unique terms that general translation tools butcher

We needed translations that were:

- Natural - How people actually speak, not textbook language

- Contextual - Understanding "AMC" means "Annual Maintenance Contract" in our industry

- Consistent - The same term should translate the same way throughout

- Cost-effective - We couldn't spend thousands of dollars every time we added a few buttons

Simple math for the mobile app alone: 3,000 keys × 12 languages = 36,000 translations, before counting the 2,500+ admin panel keys. And the total grows every week.

We needed a system that was smart, scalable, and maintainable.

Three Attempts, Three Lessons

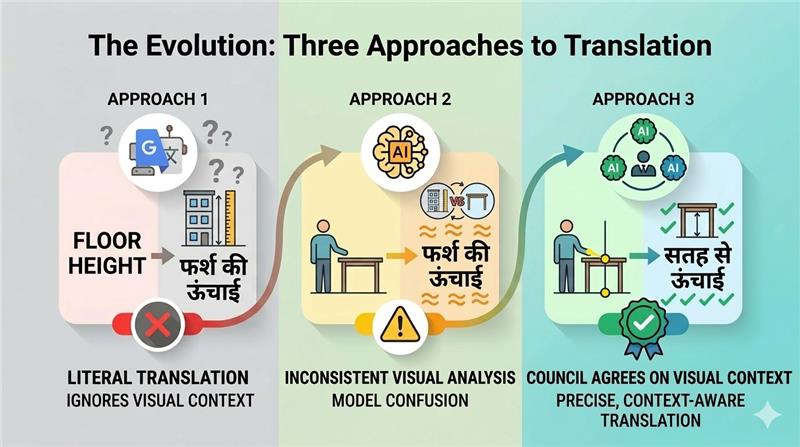

Attempt 1: Google Translate API - The Quick Fix That Wasn't

Our first approach was straightforward: use the Google Translate API. Feed it English text, get back translations in 12 languages. Simple, fast, cheap.

It failed spectacularly.

The problem? Google Translate doesn't understand context.

Example 1: The AMC Disaster

In the elevator industry, "AMC" stands for "Annual Maintenance Contract" - a critical business term every technician knows. Google Translate saw "AMC" and gave us translations that meant nothing to our users. The context was lost.

Example 2: The "Confirm" Confusion

The English word "Confirm" was translated to Hindi as "पुष्टि करना" (pushti karna) - which is technically correct but incredibly formal. It's like translating a casual "OK" button as "I hereby acknowledge and affirm."

No one talks like that. Our technicians were confused.

Lesson learned: Translation isn't just converting words from one language to another. It's about preserving meaning, context, and naturalness.

Attempt 2: Single LLM - Better, But Inconsistent

Next, we tried using modern AI language models like ChatGPT and Gemini. We wrote detailed prompts explaining:

- The elevator industry context

- Our user personas (technicians, managers, residents)

- The need for conversational, natural language

- Industry-specific terminology

This was significantly better! The AI understood context. It knew that technicians needed simple language and that "AMC" was industry jargon.
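To make the idea of a context-rich prompt concrete, here is a minimal sketch of how such a prompt could be assembled. The function name `buildTranslationPrompt`, its parameters, and the exact wording are assumptions for illustration, not the prompt used in ElevatorPlus.

```javascript
// Hypothetical prompt builder: field names and wording are illustrative only.
function buildTranslationPrompt({ targetLanguage, audience, glossary, strings }) {
  // Turn the glossary into one line per industry term.
  const glossaryLines = Object.entries(glossary)
    .map(([term, meaning]) => `- "${term}" means "${meaning}"`)
    .join('\n');

  return [
    `You are translating UI text for elevator-industry software into ${targetLanguage}.`,
    `Audience: ${audience}.`,
    'Use natural, conversational wording that native speakers would actually use.',
    'Industry terms:',
    glossaryLines,
    'Return a JSON object with the same keys and translated values.',
    'Strings to translate:',
    JSON.stringify(strings, null, 2),
  ].join('\n');
}

const prompt = buildTranslationPrompt({
  targetLanguage: 'Hindi',
  audience: 'field technicians using a mobile app',
  glossary: { AMC: 'Annual Maintenance Contract' },
  strings: { confirm_button: 'Confirm', amc_title: 'AMC Details' },
});
console.log(prompt);
```

Keeping the glossary and audience as data rather than hard-coded text makes it easy to reuse the same builder for the technician-facing app and the management-facing admin panel.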

But new problems emerged:

- Inconsistency: The same English word would sometimes get different translations depending on the batch

- Quality variation: Sometimes too formal, sometimes too casual

- No validation: We had to trust one AI model to be right every time

- Different models, different results: GPT gave one answer, Gemini gave another - which was correct?

Lesson learned: Even advanced AI needs validation and cross-checking.

Attempt 3: LLM Council - The Breakthrough

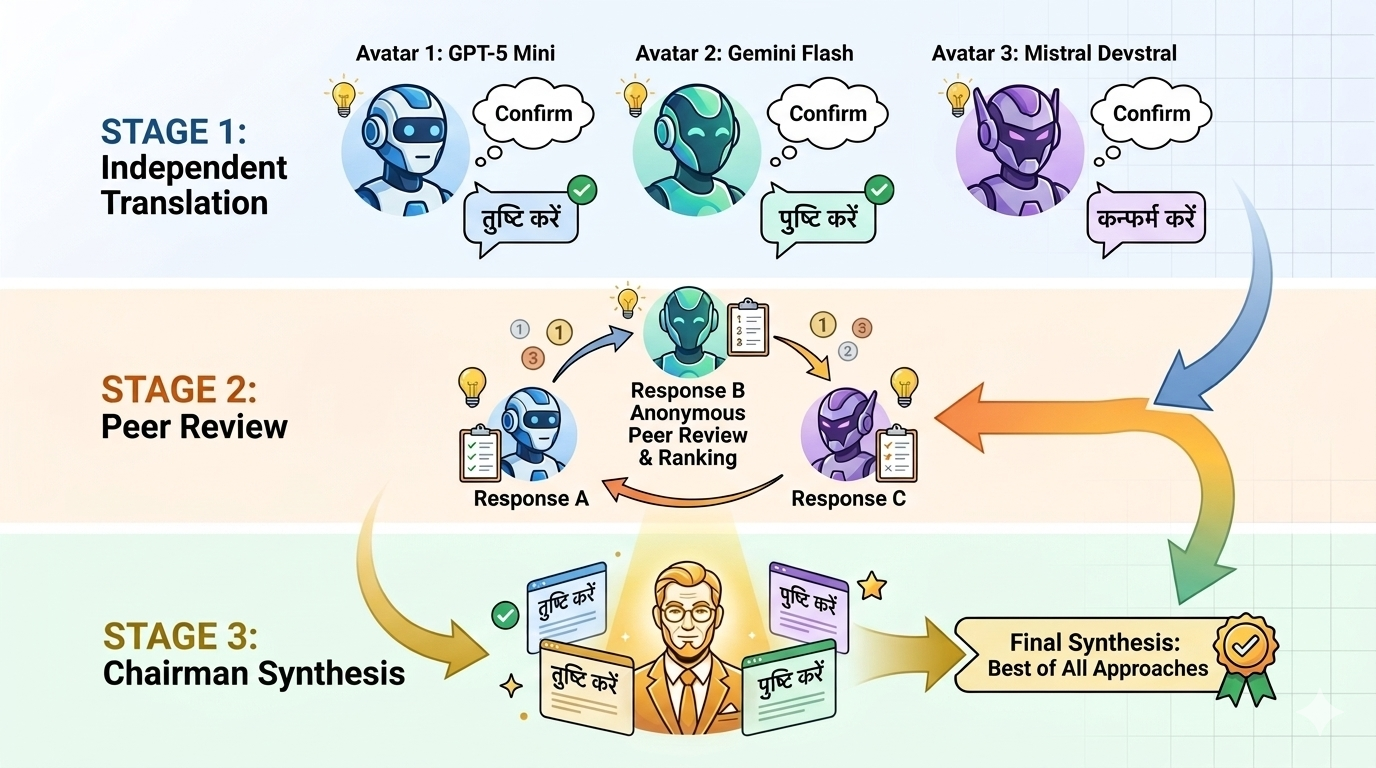

Then we discovered the concept pioneered by Andrej Karpathy (a founding member of OpenAI): LLM Council.

The idea is brilliant: Instead of asking one AI to translate, what if multiple AIs discussed the translation among themselves and reached a consensus?

Think of it like asking three expert translators to work on the same text, then having them critique each other's work, and finally having a senior translator synthesize the best version.

Here's how it works:

Stage 1: Independent Translation

Three different AI models (GPT-5 Mini, Gemini 2.5 Flash, Mistral Devstral) each translate the same text independently. They don't see each other's work - just like three translators working in separate rooms.

Stage 2: Peer Review

Each AI model then reviews the other translations (anonymously - they don't know which AI did which translation) and ranks them based on:

- How natural it sounds to native speakers

- Accuracy of meaning

- Cultural appropriateness

- Consistency
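The anonymous review step can be sketched as two small helpers: one that hides model names behind neutral labels, and one that combines the reviewers' rankings. The label format, the data shapes, and the average-rank scoring are all assumptions for illustration; this article does not specify how rankings are combined.

```javascript
// Hypothetical helpers: labels, data shapes, and average-rank scoring are assumptions.

// Replace model names with neutral labels so reviewers cannot tell who wrote what.
function anonymize(candidates) {
  const labelToModel = {};
  const labeled = {};
  Object.entries(candidates).forEach(([model, translation], i) => {
    const label = `Translation ${String.fromCharCode(65 + i)}`; // A, B, C, ...
    labelToModel[label] = model;
    labeled[label] = translation;
  });
  return { labeled, labelToModel };
}

// Each ranking is an array of labels, best first. Lower average position wins.
function aggregateRankings(rankings) {
  const totals = {};
  for (const ranking of rankings) {
    ranking.forEach((label, position) => {
      totals[label] = totals[label] || { sum: 0, count: 0 };
      totals[label].sum += position + 1;
      totals[label].count += 1;
    });
  }
  return Object.entries(totals)
    .map(([label, { sum, count }]) => ({ label, averageRank: sum / count }))
    .sort((a, b) => a.averageRank - b.averageRank);
}

// Placeholder candidates stand in for real model output.
const { labeled, labelToModel } = anonymize({
  'model-1': '<candidate from model 1>',
  'model-2': '<candidate from model 2>',
  'model-3': '<candidate from model 3>',
});

// Three reviewers each submit a ranking of the anonymized labels.
const leaderboard = aggregateRankings([
  ['Translation B', 'Translation C', 'Translation A'],
  ['Translation B', 'Translation A', 'Translation C'],
  ['Translation C', 'Translation B', 'Translation A'],
]);
console.log(leaderboard[0].label, '->', labelToModel[leaderboard[0].label]);
```

The mapping from label back to model is only consulted after the rankings are in, which is what keeps the review blind.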

Stage 3: Final Synthesis

A "Chairman" AI model reviews all three translations, reads all the peer reviews and rankings, and produces the final, optimized translation that combines the best elements.
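The three stages above can be sketched as a single async function. This is a minimal illustration, not our production code: `runCouncil`, the prompt wording, and the stub models are hypothetical, and each "model" is assumed to be any async function that takes a prompt and returns text.

```javascript
// Hypothetical sketch of the three stages. Each "model" is any async function
// (prompt) => string; real code would wrap an LLM API client here.
async function runCouncil(text, targetLanguage, councilModels, chairman) {
  // Stage 1: every model translates independently and in parallel.
  const translations = await Promise.all(
    councilModels.map((model) => model(`Translate to ${targetLanguage}: ${text}`))
  );

  // Present candidates under neutral labels so reviews stay anonymous.
  const candidates = translations
    .map((t, i) => `Candidate ${i + 1}: ${t}`)
    .join('\n');

  // Stage 2: every model reviews and ranks the anonymized candidates.
  const reviews = await Promise.all(
    councilModels.map((model) =>
      model(
        `Rank these ${targetLanguage} translations of "${text}" ` +
          `for naturalness, accuracy, cultural fit, and consistency:\n${candidates}`
      )
    )
  );

  // Stage 3: the chairman sees all candidates and reviews, then writes the final text.
  const final = await chairman(
    `Source: ${text}\n${candidates}\nReviews:\n${reviews.join('\n')}\n` +
      `Write the single best ${targetLanguage} translation.`
  );
  return { translations, reviews, final };
}

// Stub models so the sketch runs without network access or API keys.
const stub = (name) => async (prompt) =>
  prompt.startsWith('Translate') ? `[${name} translation]` : `[${name} review]`;
const chairmanStub = async () => '[final translation]';

runCouncil('Confirm', 'Hindi', [stub('a'), stub('b'), stub('c')], chairmanStub)
  .then((result) => console.log(result.final));
```

Passing the models in as plain functions keeps the orchestration independent of any particular provider, so swapping one council member for another does not touch the pipeline.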

Why this works:

When all three models agree on a translation, that consensus is a strong signal of quality. When they disagree, the peer review and ranking process surfaces which option is most natural and accurate.

Multiple perspectives catch errors that individual models miss. In our testing, the council consistently produced better translations than any single model.

This was our breakthrough. The quality of translations jumped dramatically. Natural phrasing. Correct context. Consistent terminology.

Building Our Solution: The Technical Challenges

Karpathy's project demonstrated the concept, but we had to build our own implementation from scratch in Node.js to meet ElevatorPlus's specific needs.

Challenge 1: Two Different Apps, Two Different Formats

Our mobile app stores translations in a simple flat structure, while our React admin panel uses nested hierarchies. We needed one system that could handle both formats seamlessly.

We built two entry points that share the same translation engine. The React version automatically flattens the nested structure for processing and reconstructs it afterward - users never see the difference.
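A minimal sketch of the flatten-and-reconstruct step follows. The helper names and the dot-separated path convention are assumptions for illustration; keys that themselves contain a dot would need a different separator.

```javascript
// Hypothetical helpers: dot-separated paths are an assumed convention.

// Turn { a: { b: 'x' } } into { 'a.b': 'x' }.
function flatten(obj, prefix = '') {
  const out = {};
  for (const [key, value] of Object.entries(obj)) {
    const path = prefix ? `${prefix}.${key}` : key;
    if (value !== null && typeof value === 'object' && !Array.isArray(value)) {
      Object.assign(out, flatten(value, path));
    } else {
      out[path] = value;
    }
  }
  return out;
}

// Rebuild the nested structure from dot-separated paths.
function unflatten(flat) {
  const root = {};
  for (const [path, value] of Object.entries(flat)) {
    const parts = path.split('.');
    let node = root;
    for (const part of parts.slice(0, -1)) {
      node[part] = node[part] || {};
      node = node[part];
    }
    node[parts[parts.length - 1]] = value;
  }
  return root;
}

const nested = { dashboard: { title: 'Dashboard', actions: { save: 'Save' } } };
const flat = flatten(nested);
console.log(flat);
console.log(unflatten(flat));
```

With both formats reduced to flat key-value pairs, the same translation engine can serve the mobile app and the admin panel without knowing which one it is working on.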

Challenge 2: Only Translate What's Missing

Imagine you have 3,000 translated keys, and next week you add 100 new buttons and messages. Re-translating all 3,100 keys would be wasteful and expensive.

Our system intelligently compares the English source with each target language, identifies only the missing translations, and processes just those. This has saved us hundreds of dollars in API costs and countless hours of processing time.
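The comparison itself can be very small. The sketch below assumes both files have already been loaded as flat key-value objects; `findMissingKeys` is a hypothetical name, and treating empty strings as missing is an assumption.

```javascript
// Hypothetical helper: returns the source entries that still need translating.
function findMissingKeys(source, target) {
  const missing = {};
  for (const [key, text] of Object.entries(source)) {
    const existing = target[key];
    // Treat absent keys and blank values as untranslated.
    if (typeof existing !== 'string' || existing.trim() === '') {
      missing[key] = text;
    }
  }
  return missing;
}

const english = { save: 'Save', cancel: 'Cancel', amc_title: 'AMC Details' };
const hindi = { save: '<existing Hindi text>', cancel: '' };

console.log(findMissingKeys(english, hindi));
```

Note that this only detects keys that are absent or blank. Detecting English strings whose wording changed after they were translated would need extra bookkeeping, such as storing a hash of the source text alongside each translation.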

Challenge 3: The Chunking Balancing Act

This was the trickiest challenge to solve.

We have over 3,000 translation keys. We quickly realized we couldn't process them all at once, but we also couldn't do them one by one. We needed to find the sweet spot.

Why not translate all 3,000 at once?

- AI models have context limits - they can't process that much at once

- Larger batches are more error-prone

- If something fails, you lose all your progress

- Inconsistencies creep in across such a large volume

Why not translate one key at a time?

- 3,000 individual API calls would take forever

- Costs would skyrocket

- You lose the benefit of context - related terms should be seen together

- The same word might get translated differently each time

Our solution: Smart chunking with 20 keys per batch

After extensive testing, we found that 20 keys per chunk gave the best balance for our workload. Here's why it works:

Context preservation: Related UI elements often appear together - "Save," "Cancel," "Confirm" are usually near each other in our source files. Processing them in the same batch of 20 ensures the AI sees them together and maintains consistency.

Manageable size: 20 keys is small enough to process reliably without errors, but large enough that the AI understands the context and patterns.

Clear progress tracking: 3,000 keys divided into chunks of 20 means 150 clear milestones. You can see steady progress: chunk 45/150 completed, chunk 46/150 completed, etc.

Cost efficiency: Balances API costs with processing speed - not too many calls, not too few.

The magic happens within each chunk: When the LLM Council processes 20 keys together, all three AI models see those keys as a group. This means "Save Changes," "Cancel Changes," and "Discard Changes" get translated with the word "Changes" staying consistent across all three phrases.
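Splitting keys into batches while preserving source-file order can be sketched as follows. `chunkEntries` is a hypothetical name; the default of 20 comes from the batch size described above.

```javascript
// Hypothetical helper: split a flat key-value object into ordered batches.
function chunkEntries(translations, size = 20) {
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError('size must be a positive integer');
  }
  const entries = Object.entries(translations);
  const chunks = [];
  for (let i = 0; i < entries.length; i += size) {
    // slice keeps neighbouring keys together, so related UI text shares a batch.
    chunks.push(Object.fromEntries(entries.slice(i, i + size)));
  }
  return chunks;
}

// 450 sample keys split into 22 full chunks plus one chunk of 10.
const sample = Object.fromEntries(
  Array.from({ length: 450 }, (_, i) => [`key_${i}`, `Text ${i}`])
);
const chunks = chunkEntries(sample);
console.log(chunks.length, Object.keys(chunks[22]).length); // prints: 23 10
```

Because the batches follow the order of the source file, keys that sit next to each other, such as "Save," "Cancel," and "Confirm," tend to land in the same chunk.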

Challenge 4: Resilience - When Things Go Wrong

In the real world, things fail. Network drops. API rate limits hit. Laptops go to sleep. Power outages happen.

When you're 2 hours into translating and on chunk 127 of 150, the last thing you want is to start over from scratch.

We built automatic progress saving that writes to disk after every single chunk. If the process crashes while working on chunk 88, you just restart the program and it automatically resumes from chunk 88, because chunks 1 through 87 are already saved. No manual intervention. No lost work.

We've had translation runs interrupted by everything from Wi-Fi drops to API outages, and every single time, we just restart and continue seamlessly.
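A minimal sketch of save-after-every-chunk with automatic resume is shown below. The checkpoint file layout (`completedChunks` plus `translations`), the function names, and the write-then-rename step are assumptions for illustration; the fake translator simulates one network failure so the resume path is exercised.

```javascript
const fs = require('node:fs');
const os = require('node:os');
const path = require('node:path');

// Load the checkpoint, or start fresh if none exists (assumed file layout).
function loadProgress(file) {
  if (!fs.existsSync(file)) return { completedChunks: 0, translations: {} };
  return JSON.parse(fs.readFileSync(file, 'utf8'));
}

// Write to a temp file, then rename, so a crash mid-write cannot corrupt the checkpoint.
function saveProgress(file, progress) {
  const tmp = `${file}.tmp`;
  fs.writeFileSync(tmp, JSON.stringify(progress, null, 2));
  fs.renameSync(tmp, file);
}

// Process chunks in order, skipping those already recorded in the checkpoint.
async function translateWithResume(chunks, translateChunk, file) {
  const progress = loadProgress(file);
  for (let i = progress.completedChunks; i < chunks.length; i++) {
    const result = await translateChunk(chunks[i]);
    Object.assign(progress.translations, result);
    progress.completedChunks = i + 1;
    saveProgress(file, progress);
    console.log(`chunk ${i + 1}/${chunks.length} completed`);
  }
  return progress.translations;
}

// Demo: a fake translator that fails once on the second chunk, then a restart.
const demoFile = path.join(os.tmpdir(), `translation-progress-${process.pid}.json`);
const demoChunks = [{ a: 'A' }, { b: 'B' }, { c: 'C' }];
let failOnce = true;
const fakeTranslate = async (chunk) => {
  if (failOnce && 'b' in chunk) {
    failOnce = false;
    throw new Error('simulated network drop');
  }
  return Object.fromEntries(Object.entries(chunk).map(([k, v]) => [k, `[translated] ${v}`]));
};

(async () => {
  // First run stops at chunk 2; chunk 1 is already on disk.
  await translateWithResume(demoChunks, fakeTranslate, demoFile)
    .catch((e) => console.log(`run interrupted: ${e.message}`));
  // Second run picks up at chunk 2 without redoing chunk 1.
  const done = await translateWithResume(demoChunks, fakeTranslate, demoFile);
  console.log(done);
  fs.unlinkSync(demoFile);
})();
```

The checkpoint only advances after a chunk's result has been written, so an interrupted chunk is simply retried on the next run.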

Challenge 5: Porting to Node.js

The original llm-council was built in Python, but our entire ElevatorPlus stack runs on Node.js. Rather than introduce a Python dependency and deal with cross-language complexity, we rebuilt the entire system from scratch in JavaScript.

This gave us full control and seamless integration with our existing codebase.

How It Works in Practice

Let me walk you through a real translation run:

Scenario: We just added 450 new strings (feature labels and messages) to our mobile app. We need to translate these to Hindi.

Step 1: The system compares English (source) with Hindi (target) and identifies exactly 450 missing translations.

Step 2: Those 450 keys are split into 23 chunks of up to 20 keys each (22 full chunks + 1 chunk with 10 keys).

Step 3: For each chunk, the LLM Council process runs:

- Three AI models independently translate the keys in that chunk

- Each model reviews and ranks the others' translations

- The Chairman AI synthesizes the best final translation

- Progress is saved to disk

Step 4: The process logs each step clearly, showing real-time progress as each chunk completes and saves.

Step 5: If anything fails (network issue, API limit), just restart - it picks up exactly where it left off.

Step 6: After all 23 chunks complete, the final translations are written to the output file.

Total time: About 45 minutes for 450 keys. The entire process is automatic and fault-tolerant.

The Results: Global Impact

Since implementing the LLM Council translation system, the impact has been transformative:

Quality Metrics:

- 95%+ accuracy on industry-specific terminology

- Zero complaints about translation quality from users

- Natural phrasing validated by native speakers in each country

- Consistent terminology across the entire platform

User Adoption:

- Technicians in Vietnam now complete work orders 40% faster

- German facility managers report the admin panel is "easy to navigate"

- Arabic-speaking safety inspectors file reports without confusion

- Hindi-speaking operators no longer need English-speaking colleagues nearby

Operational Efficiency:

- 12 languages fully supported and maintained

- 36,000+ translations generated and validated

- Weekly updates take just 10-15 minutes for incremental changes

- $800+ saved by only translating what's new, not re-translating everything

Real Stories:

A technician in France told us, "Finally, I don't have to guess what the buttons mean. Everything makes sense now."

A building manager in Germany said, "It feels like the software was built for us, not just translated."

That's the difference between word-for-word translation and contextual, natural localization.

What We Learned

Building this system taught us lessons that go beyond just translation:

1. Context is Everything

Generic translation tools fail because they don't understand your specific domain. Whether you're in elevator management, healthcare, finance, or e-commerce, you must provide rich context about your industry, your users, and your terminology.

2. Collective Intelligence Wins

One expert is good. Three experts debating and validating each other's work is better. In our experience, the LLM Council approach consistently outperformed any single AI model.

3. The Right Chunk Size Matters

Too big, and you get errors and inconsistency. Too small, and you lose context and efficiency. Finding the balance is critical - for us, it was 20 keys per batch.

4. Build for Failure

Things will go wrong. Network issues. API limits. Power outages. Your system must save progress constantly and resume automatically. Don't make users start over when something fails.

5. Only Translate What's New

Re-translating everything every time is wasteful. Smart systems identify exactly what's missing and process only that.

6. Real Users Are the Best Validators

All the AI sophistication in the world doesn't replace watching a real Vietnamese technician use your app and smile because they understand every word.

Looking Ahead: Future Innovations

We're not done innovating. Here's what's next:

Short Term:

- Translation memory - Reuse common phrases across projects

- User feedback loop - Let native speakers suggest improvements

- A/B testing - Offer translation variants and track which ones users prefer

Long Term:

- Regional dialects - Arabic, Spanish, and Chinese have significant variations

- Real-time translation - Translate user-generated content like comments and messages

- Voice integration - Help technicians with verbal instructions in their language

Why This Matters

At Accucia, we believe software should work for everyone, regardless of what language they speak.

When a technician in Vietnam can confidently use our mobile app in Vietnamese, when a German manager navigates the admin panel effortlessly, when an Arabic-speaking inspector files reports without confusion - that's when we know we've succeeded.

Technology should bridge gaps, not create them. Language should never be a barrier to using great software.

The LLM Council translation system is now a core part of ElevatorPlus. It's proof that when you combine:

- Deep understanding of your users

- Cutting-edge AI technology

- Practical engineering

- Real-world testing

...you can solve problems that seemed impossible.

We've shared our journey and our approach because we believe in lifting the entire industry. If you're building global software and struggling with translation quality, we hope our experience inspires solutions.

The world is multilingual. Our software should be too.

About Accucia & ElevatorPlus

Accucia builds enterprise software solutions for the elevator industry. ElevatorPlus is a comprehensive SaaS platform used globally by elevator companies to manage operations, maintenance, compliance, and customer service. The platform serves thousands of users across multiple continents - all in their native languages.

Want to learn more about how we're using AI to solve real-world problems? Connect with us to discuss innovation in enterprise software.

Want to improve your software’s multilingual experience? Reach out to us today!